To measure second language (L2) proficiency, both qualitative and quantitative methods have been employed, such as face-to-face interviews, standardized tests, and linguistic feature analysis and modeling. In recent years, a popular means of quantitatively measuring L2 proficiency has been to look at the linguistic features of learners’ L2 production from the point of view of complexity, which is one aspect of the Complexity, Accuracy, and Fluency (CAF) framework for learner language analysis.

Complexity measures have been employed to gauge L2 proficiency and its development at multiple levels of linguistic representation, such as lexis, morphosyntax, discourse, and psycholinguistics. In such research, linguistic complexity functions as a basic descriptor of L2 performance and as an indicator of L2 proficiency and development. Hundreds of complexity measures have been developed and used in previous investigations. In general terms, calculating these measures boils down to counting the number of linguistic components (e.g., words, dependent clauses, complex nominals) and the number and types of connections between these components. Although research making use of these measures has helped to examine a number of important issues concerning first language (L1) and L2 acquisition, such as proficiency assessment, language development, and language teaching/learning, the methods for enumerating linguistic components and calculating their relative ratios make only limited use of the available information. These studies contributed highly useful insights into the nature of L2 lexical/syntactic changes in general and into the development of L2 learners over time. Meanwhile, they also leave much room for further exploration. There are several potential limitations regarding these measures and methods. First, most of the studies that employed them relied on summing frequencies or taking ratios of frequencies.
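To make concrete what a count-and-ratio complexity measure looks like, here is a minimal sketch of two common ones, the type-token ratio and mean sentence length. The function names and the naive regex tokenizer are assumptions made for illustration; they are not taken from the studies discussed here, which typically rely on proper linguistic annotation.

```python
import re


def tokenize(text):
    """Return lowercase word tokens using a deliberately naive regex."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(text):
    """Lexical diversity as distinct word types divided by total tokens."""
    tokens = tokenize(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def mean_sentence_length(text):
    """Average number of tokens per sentence, splitting on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(tokenize(s)) for s in sentences) / len(sentences)
```

Both functions reduce a text to a single number built from counts, which is the kind of summation or ratio of frequencies that this section describes.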

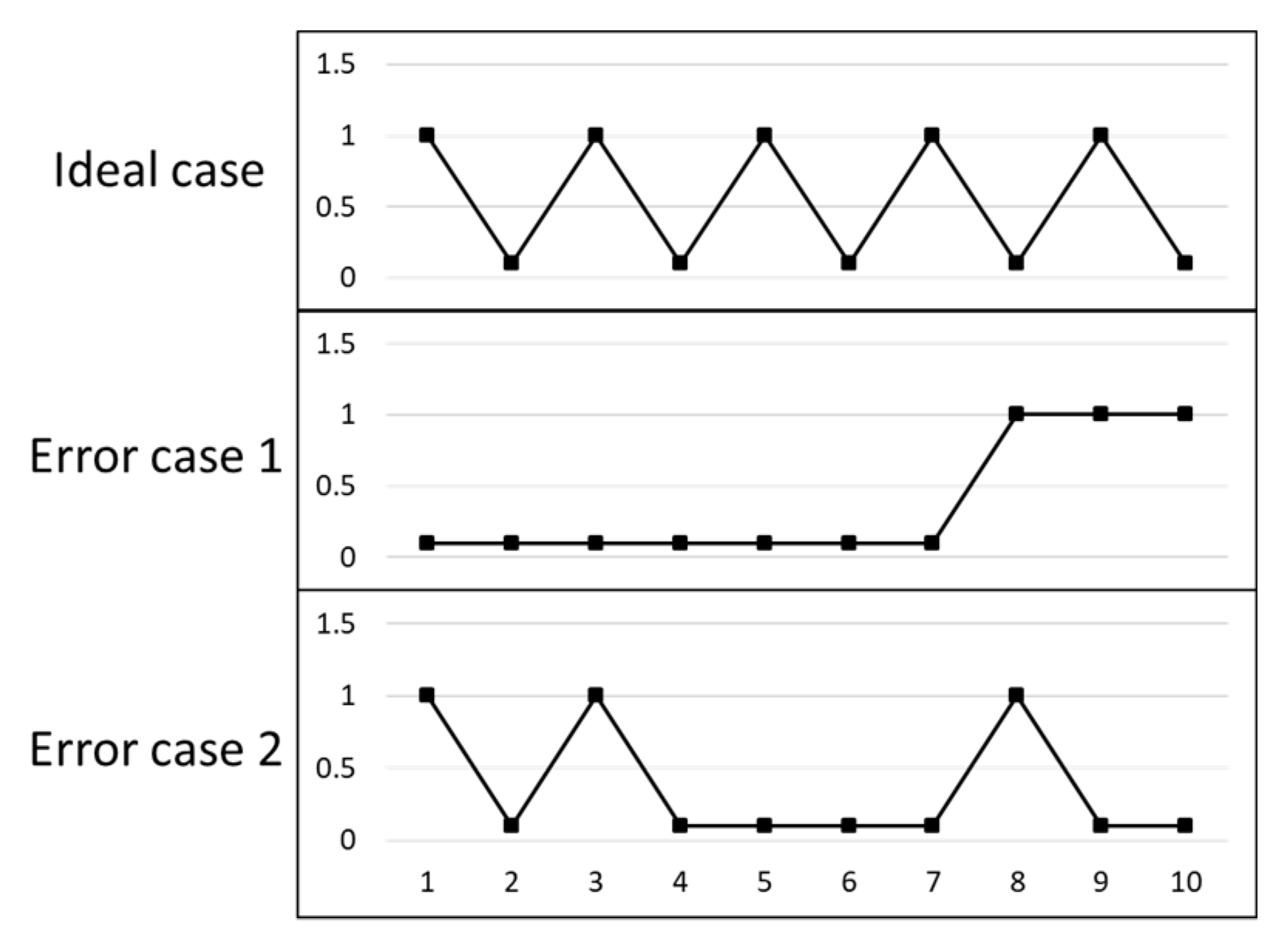

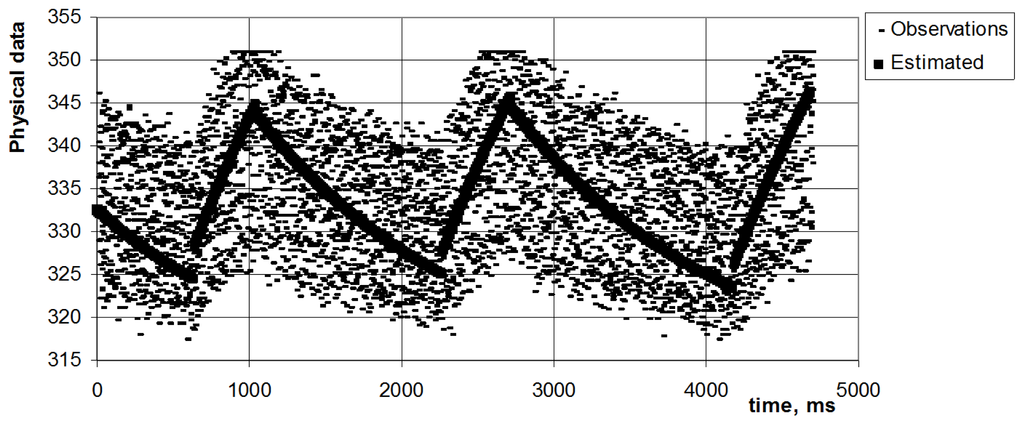

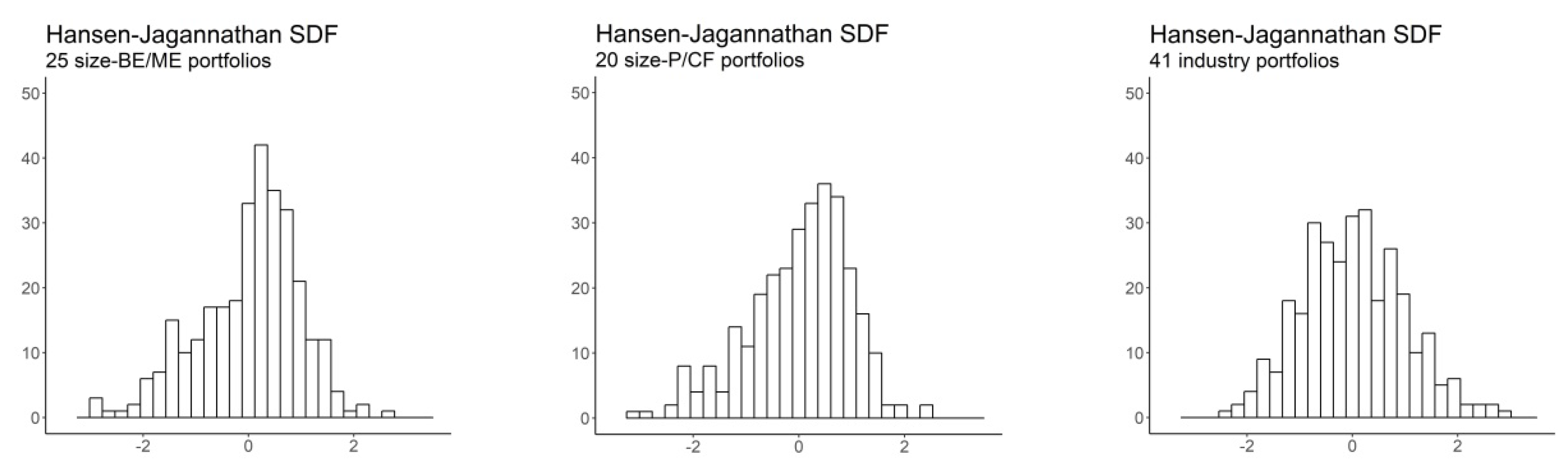

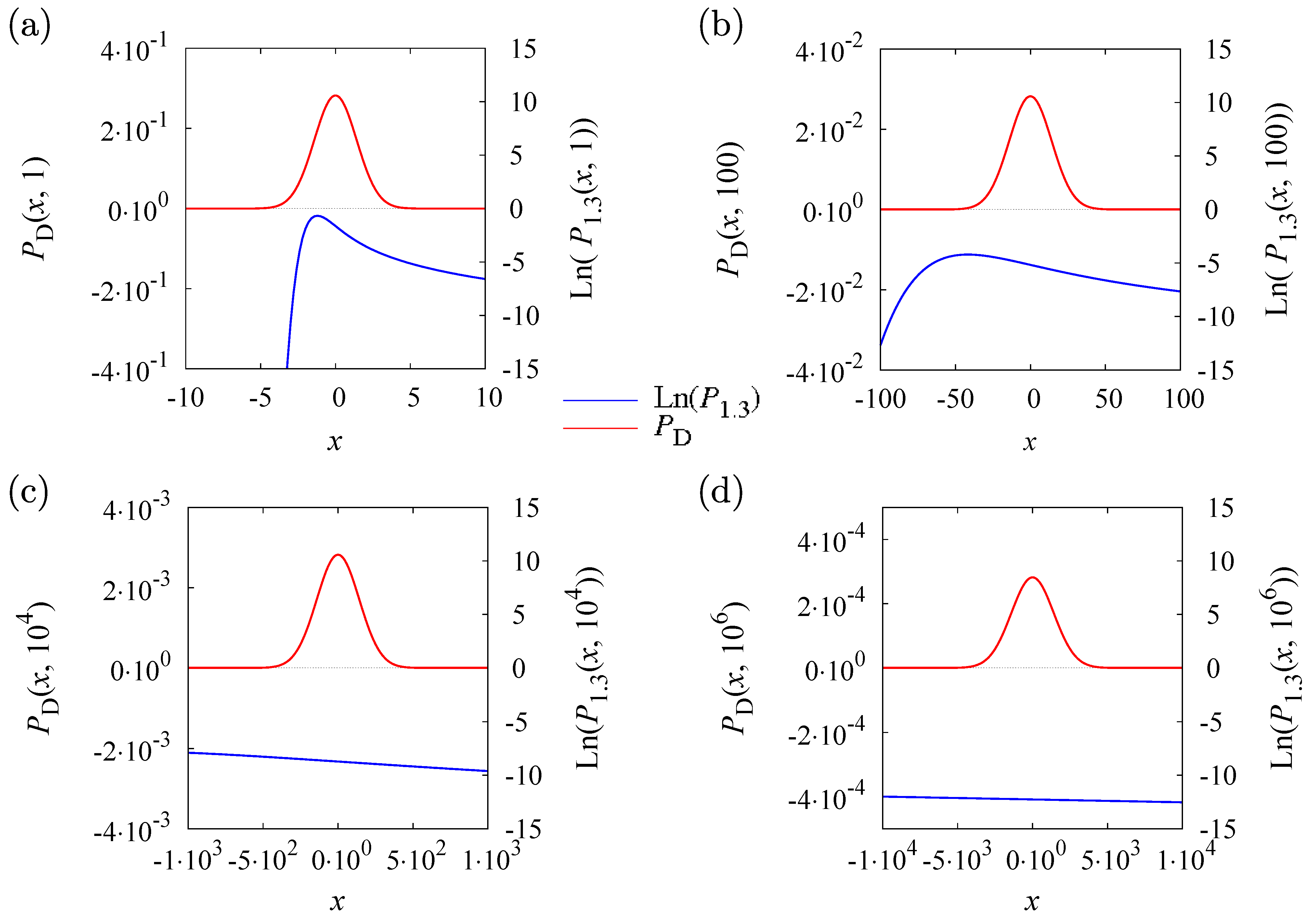

Measuring learners’ second language (L2) proficiency is an important issue not only for practical language teaching but also for research on L2 acquisition. This study applies relative entropy to a large-scale naturalistic corpus to calculate the differences among L2 learners at different levels. We chose lemmas, tokens, POS trigrams, and conjunctions to represent lexis and grammar, and used relative entropy to detect patterns of proficiency development across L2 groups. The results show that the difference in information distribution, for both lexical and grammatical features, continues to increase from lower-level to higher-level L2 learners. This result is consistent with the assumption that, in the course of second language acquisition, L2 learners develop towards a more complex and diverse use of language. For comparison, the study also applies time-series statistics to the L2 differences that traditional frequency-based methods yield on the same corpus. The results from the traditional methods, however, rarely show regularity. Compared with the algorithms used in traditional approaches, relative entropy performs much better in detecting L2 proficiency development. In this sense, we have developed an effective and practical algorithm for stably detecting and predicting developments in L2 learners’ language proficiency.
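As a rough illustration of how relative entropy can compare two learner groups, the sketch below computes the standard Kullback-Leibler divergence D(P‖Q) = Σ p(x) log2(p(x)/q(x)) between the feature distributions of two samples. The text here does not give the study's exact formulation, so the add-alpha smoothing over the shared vocabulary, the base-2 logarithm, and the function name `relative_entropy` are all assumptions.

```python
import math
from collections import Counter


def relative_entropy(sample_p, sample_q, alpha=1.0):
    """Estimate D(P || Q) in bits from two sequences of hashable features.

    Add-alpha smoothing (an assumption; alpha must be positive) keeps every
    probability non-zero, so the logarithm is always defined.
    """
    counts_p, counts_q = Counter(sample_p), Counter(sample_q)
    vocab = set(counts_p) | set(counts_q)
    total_p = sum(counts_p.values()) + alpha * len(vocab)
    total_q = sum(counts_q.values()) + alpha * len(vocab)
    divergence = 0.0
    for item in vocab:
        p = (counts_p[item] + alpha) / total_p
        q = (counts_q[item] + alpha) / total_q
        divergence += p * math.log2(p / q)
    return divergence
```

The features can be any hashable items: lemma strings, or tuples of three POS tags for POS trigrams. Relative entropy is asymmetric, so `relative_entropy(a, b)` generally differs from `relative_entropy(b, a)`, and the direction of comparison has to be fixed before comparing levels.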
